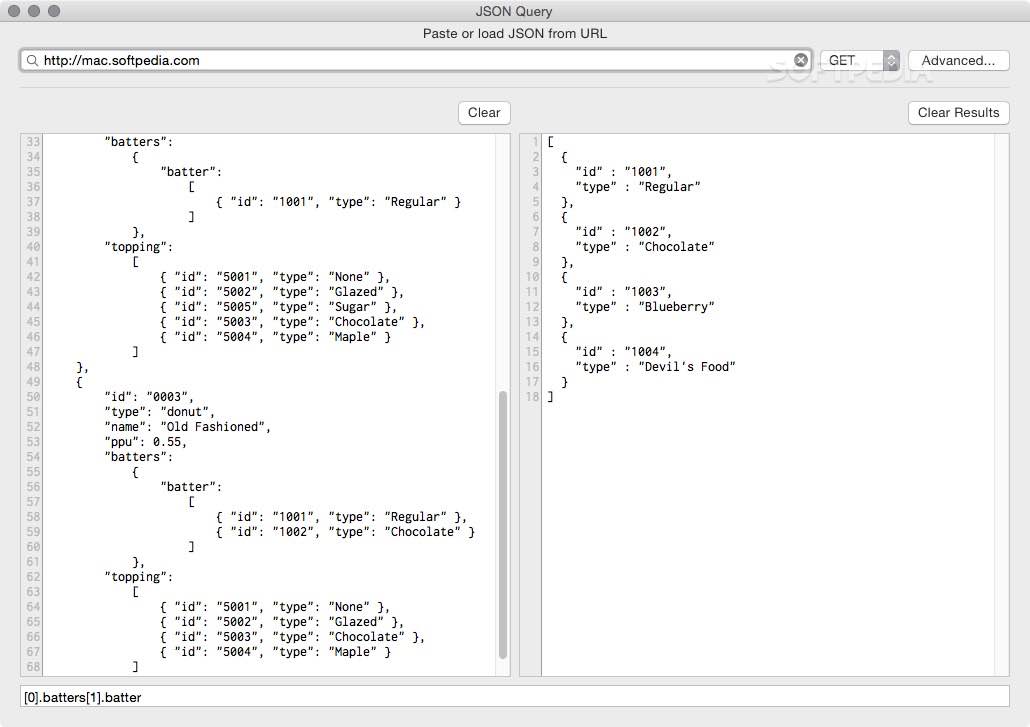

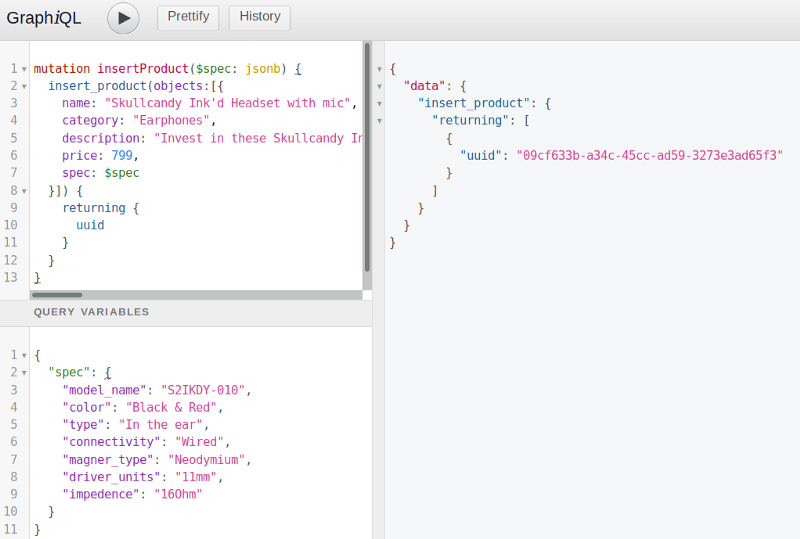

I observed a performance anomaly with JSON in PostgreSQL that I believe is incorrect. I saw it in version 10, although I didn't test earlier ones. Currently, in our application, we store the JSON data as the Text type in PostgreSQL.

A simple query works fine on a table of 92,000 records with ~60KB average record size. If I add additional fields to the list of fields, however, execution time increases linearly: retrieving 2 fields takes ~310 ms, 3 fields ~465 ms, and so on. The explain statement for 5 fields looks normal. It looks like every record gets parsed separately for each key.

When we analyzed the issue, we found that a few inserts and selects take some time to finish, and we are seeing some performance impact because of this table.

Unless I'm doing something incorrectly, this makes retrieval of individual keys completely useless for practical cases. It would leave only the unattractive alternative of getting the entire JSON document. In my case, instead of retrieving a few hundred bytes, that would force getting 60KB for each query.
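To make the suspected cause concrete, here is a minimal Python sketch of the difference between parsing a document once per requested key and parsing it once for all keys. It only illustrates the hypothesis that every record is parsed separately for each key; it is not PostgreSQL's actual implementation. The function names, key names, and document shape are all made up for the example.

```python
import json


def make_document(num_keys=2000):
    # Hypothetical stand-in for one stored record: a flat JSON object
    # serialized as text, tens of kilobytes in size.
    return json.dumps({f"key_{i}": "x" * 20 for i in range(num_keys)})


def extract_per_key(text, keys):
    """Parse the whole document again for every requested key.

    This mirrors the suspected behavior: the work grows linearly
    with the number of keys requested.
    """
    parses = 0
    values = {}
    for key in keys:
        doc = json.loads(text)  # full parse, repeated for each key
        parses += 1
        values[key] = doc[key]
    return values, parses


def extract_once(text, keys):
    """Parse the document a single time, then pick out all keys."""
    doc = json.loads(text)  # one full parse, shared by all keys
    return {key: doc[key] for key in keys}, 1


if __name__ == "__main__":
    text = make_document()
    keys = ["key_1", "key_2", "key_3"]
    _, per_key_parses = extract_per_key(text, keys)
    _, single_parses = extract_once(text, keys)
    print(f"document size: {len(text)} bytes")
    print(f"per-key parses: {per_key_parses}, single-pass parses: {single_parses}")
```

If the database really does behave like `extract_per_key`, the cost of each additional field is roughly one more full parse of the record, which would be consistent with the linear growth in execution time described above.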
